10 Best Practices for A/B Testing Your Email Marketing Campaigns

A/B testing, or split testing, is a strategy that applies to almost every marketing discipline, especially email. It is a great way to determine which variations of a marketing message improve conversion rates and, in turn, your brand’s sales and revenue.

Increasing conversion rates by even a small percentage can have a significant impact on your bottom line and ROI. The most common mistake email marketers make, however, is becoming comfortable with average, or even good, results.

The most effective email marketers are always looking for new ways to improve their strategies and never fall into complacency.

Let’s dive into what A/B testing is, why it’s so important, and which common best practices will get you started.

What is A/B testing in email marketing?

A/B testing, also known as email split testing or bucket testing, is the process of figuring out which of two campaign options is more effective at earning opens or clicks. The experiment is conducted by sending two different versions of an email (variant A and variant B) to separate groups in your email list.

It’s important to note that a true A/B test changes only one element between variant A and variant B, so that you can isolate the impact that specific element has on email performance.

Why do you need email A/B testing?

With the average person receiving 120+ emails per day, cutting through the noise can be a huge challenge. From subject lines to recipient names to elements like price decreases, free shipping, or personalized predictive product recommendations, A/B testing gives you real data on what’s working and what your customers respond to.

Testing allows your business to understand what your subscribers want while converting more prospects into consumers.

Common email A/B testing variables

There are many different reasons why someone might decide to open your email. Each of these reasons is a variable you can test. Some of the most common email testing variables are:

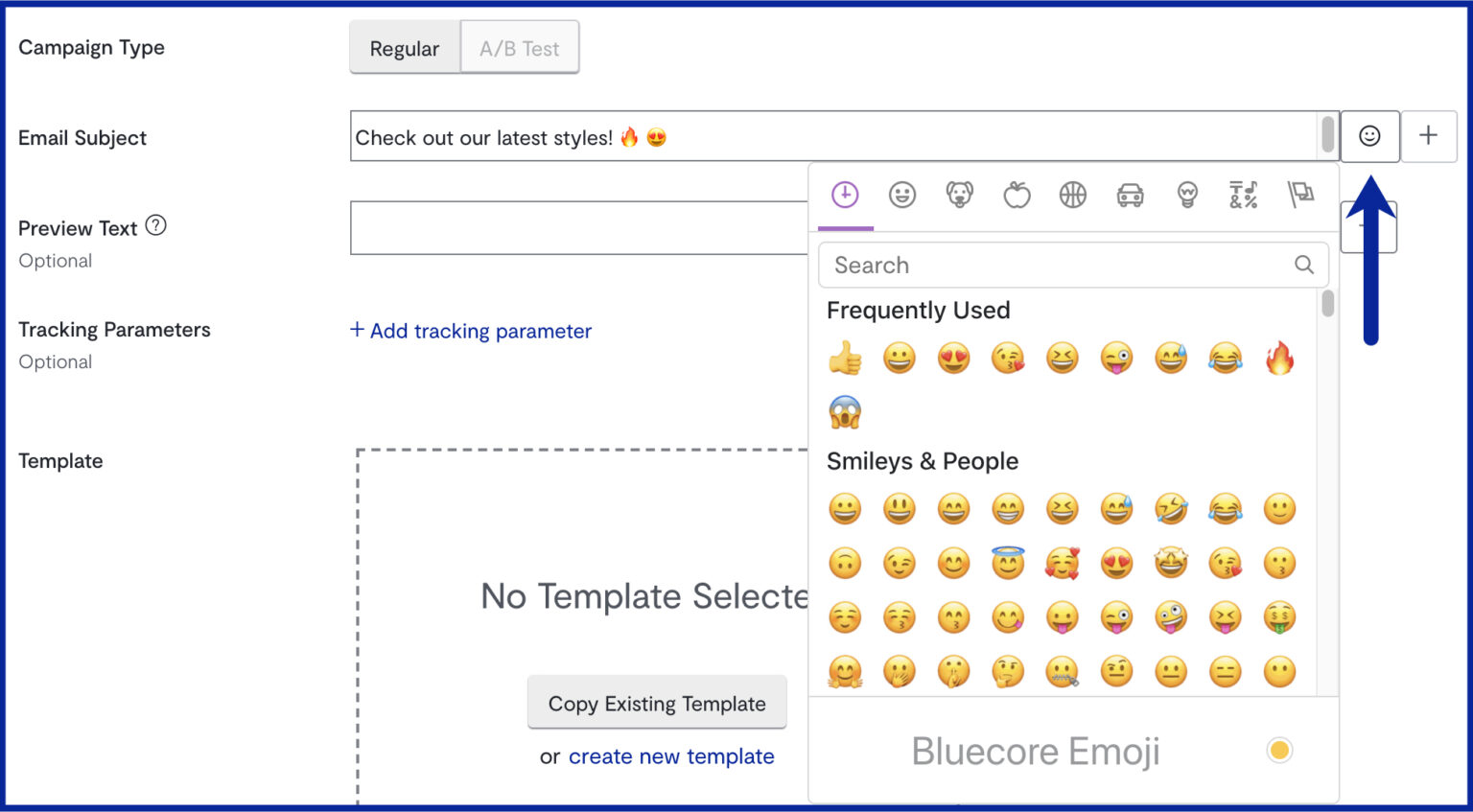

- Subject lines: Subject lines are the first thing a person sees, so they can ultimately make or break your strategy. Some things to test include length, casing, emoji use, urgency, and first-person vs. second-person. Don’t hesitate to get creative.

- Personalization: A personalized touch will set you apart from your competitors. Most companies are already incorporating these techniques into their email marketing strategies. A great example of this is Sephora’s “next best purchase” push email that is sent out to recommend products post-purchase.

- Images: A picture is worth a thousand words and is a powerful tool in driving engagement. Common tests include colorful and dynamic vs. simple and black and white, animated GIFs and videos vs. standard images, and images of products vs. people.

- Call To Action: Whether it’s a discount code or another type of offer, CTAs are common A/B testing variables.

- Timing: Not all of your customers or subscribers open their email at the same time. They may be located in different states or even different parts of the world. Ensure your email marketing platform is capable of sending emails when each person or segment is most likely to open them.

10 A/B testing best practices for your email campaigns

A/B testing in email marketing can take a lot of time and energy. It’s essentially like performing mini behavioral science experiments on a continuous basis. Whether you’re just getting started or consider yourself a veteran, here are a few best practices to keep in mind to make testing your email marketing program a little easier:

1. Isolate your test variables

In order to complete a successful A/B test, you should only test one variable at a time. Doing this is the only way to truly determine how effective that variable is.

Let’s say you’re looking to increase clicks. In your single test, you try a few different call-to-action button designs and different images in your email body.

Even if you succeed in seeing an increase in clicks, how will you know what actually drove that behavior? Short answer: you won’t. Isolate variables for every test you run so you can know for certain which variables deliver your results.

2. Always use a control version to test against

A “control” or default is the original version of the email you would have sent anyway, as if you weren’t testing anything. This will provide you with a reliable baseline to compare your results with.

A control version matters because there are always “confounding variables”: factors you can’t control that can affect the validity of your test. For example, a confounding variable could be something like one of your email recipients being on vacation without internet access during your test.

By testing against a control version, you cut down on as many confounding variables as possible, which makes your results more accurate. The control also gives you an easy baseline to gauge results against; without one, it becomes difficult to see the actual lift the test version has driven.
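
As a rough illustration of measuring lift against a control, here is a minimal Python sketch. The function name `relative_lift` and the sample numbers are hypothetical, chosen only for this example; they are not drawn from any real campaign.

```python
def relative_lift(control_conversions, control_sent,
                  variant_conversions, variant_sent):
    """Relative lift of the test variant over the control version."""
    control_rate = control_conversions / control_sent
    variant_rate = variant_conversions / variant_sent
    # Lift is the change in conversion rate expressed as a share of the baseline.
    return (variant_rate - control_rate) / control_rate


# Hypothetical numbers: the control converts 200 of 5,000 sends (4.0%),
# the test variant converts 260 of 5,000 sends (5.2%).
lift = relative_lift(200, 5000, 260, 5000)
print(f"Lift over control: {lift:.1%}")  # prints "Lift over control: 30.0%"
```

A lift figure on its own does not say whether the difference is real or just noise, which is why the significance check in practice 4 matters.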

3. Test simultaneously

Timing is everything, especially in ecommerce marketing. Throughout the year, retailers experience seasonal highs and lows. In order to account for any seasonality, changes in customer behavior or changes to your product catalog, it is best to run your test variants in parallel, over the same time period.

Bluecore will take care of this for our customers by splitting audiences and delivering tests randomly.

4. Check if results are statistically significant before declaring a “winner”

Going back to comparing A/B tests to science experiments, you want to make sure the results you’re finding actually mean something before you move forward and implement them into your email marketing strategy.

In statistics, for results to mean something, they need to be “statistically significant.” To determine statistical significance, we use a “p-value.” This represents the probability of seeing a difference at least as large as the one you observed if there were truly no difference between the variants, that is, if random chance alone were at work.

In general, a p-value of 5% (0.05) or lower is considered statistically significant. Depending on the number of emails your triggered email program sends, it may take a few weeks for your results to reach a p-value of 5% or less.
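
To make the p-value idea concrete, here is a minimal Python sketch of one common way to compute it for two conversion rates: a pooled two-proportion z-test. This is an assumption about method, not a description of how any particular email platform calculates significance, and the function name and sample counts are hypothetical.

```python
import math


def two_proportion_p_value(conversions_a, sent_a, conversions_b, sent_b):
    """Two-sided p-value for the difference between two conversion rates."""
    rate_a = conversions_a / sent_a
    rate_b = conversions_b / sent_b
    # Pooled rate: the conversion rate if both variants performed identically.
    pooled = (conversions_a + conversions_b) / (sent_a + sent_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / sent_a + 1 / sent_b))
    if std_err == 0:
        return 1.0  # no variation at all, so no evidence of a difference
    z = (rate_b - rate_a) / std_err
    # Two-sided tail probability of the standard normal distribution.
    return math.erfc(abs(z) / math.sqrt(2))


# Hypothetical counts: 200 of 5,000 for variant A vs. 260 of 5,000 for variant B.
p = two_proportion_p_value(200, 5000, 260, 5000)
print("significant at 5%" if p <= 0.05 else "not significant yet")
```

The z-test approximation works best with large send volumes; with very small lists, an exact test may be a better fit.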

5. Continuously challenge through new tests

Just about every aspect of an email can be A/B tested for optimization. Get creative with your variables and keep thinking of new aspects to test.

Take your subject line as an example. Several variables can be tested within that single aspect of an email, such as length, urgency, mention of a promotion or use of the recipient’s name, among others.

Trends and audience behaviors are constantly changing, so it is important for marketers to continuously analyze and adjust strategies in order to succeed. The more your testing and email programs evolve with your audience, the more revenue your business stands to earn.

6. Test across multiple email clients

Bluecore enables brands to preview and test functionality on campaigns. View different devices, operating systems, and email clients to see how your emails, including any emojis, will render.

Send a test email to yourself and test across different email clients and browsers. This process can be a little manual, but may help if you’re testing a specific email client.

The more popular emojis may render better across different inboxes. Because they’re used frequently, their rendering support may be wider.

7. Define your audience

You need to understand which audience should receive which email and then split them in a random way. Behavioral data is precious when it comes to choosing the right target audience and helps you create successful tests.

In general, the more specific you are about segmenting the audience you wish to target, the better result you will get.
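
Once the target segment is chosen, the random split itself can be sketched in a few lines of Python. This is only an illustration under simple assumptions (a plain list of addresses and a 50/50 split); the function name `split_audience` and the example addresses are hypothetical, and most email platforms handle this step for you.

```python
import random


def split_audience(recipients, seed=42):
    """Randomly split a segment into two groups of (nearly) equal size."""
    shuffled = list(recipients)  # copy so the original list is left untouched
    # A seeded generator makes the split reproducible for later analysis.
    random.Random(seed).shuffle(shuffled)
    midpoint = len(shuffled) // 2
    return shuffled[:midpoint], shuffled[midpoint:]


# Hypothetical segment of 11 subscribers.
segment = [f"user{i}@example.com" for i in range(11)]
group_a, group_b = split_audience(segment)
print(len(group_a), len(group_b))  # prints "5 6"
```

Shuffling before splitting matters: cutting a list that is sorted by signup date or purchase history would put systematically different customers in each group.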

8. Identify your goals and justify the variation

A/B testing ultimately means some customers will get a less effective email than others. Develop a hypothesis and know your goals. Establishing what metrics you want to concentrate on will help you gauge success. You need to have reason to believe each change will pose a clear benefit – testing shouldn’t be done for the sake of testing.

9. Manage your data properly

Ideally, you should be backing all of your email marketing decisions with data. Unfortunately, depending on how many emails you are sending, tracking your tests and managing data can get out of control. It’s important to choose an email marketing platform that will make your workload easier.

Remember that it’s ok to ask for help.

10. Be patient

It may be tempting to make changes before your campaign is done running, but that defeats the purpose. You have to let your tests run until you have statistical significance. Don’t edit live tests!

Let the data flow in and properly store this information for actionable analysis.
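
One way to set expectations for how long a test needs to run is to estimate the required sample size up front. The Python sketch below uses a standard textbook approximation for comparing two proportions. The 5% significance level matches the threshold discussed earlier, while the 80% power setting, the function name, and the example rates are assumptions made for illustration.

```python
import math
from statistics import NormalDist


def sample_size_per_variant(baseline_rate, expected_rate,
                            alpha=0.05, power=0.80):
    """Approximate recipients needed per variant to detect the given change."""
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # two-sided significance cutoff
    z_power = NormalDist().inv_cdf(power)          # assumed 80% power by default
    variance = (baseline_rate * (1 - baseline_rate)
                + expected_rate * (1 - expected_rate))
    effect = expected_rate - baseline_rate
    return math.ceil((z_alpha + z_power) ** 2 * variance / effect ** 2)


# Hypothetical goal: detect an improvement from a 4% to a 5% click rate.
needed = sample_size_per_variant(0.04, 0.05)
print(f"Roughly {needed} recipients per variant")
```

Dividing that figure by the number of emails your program sends per week gives a ballpark test duration. Smaller expected improvements require considerably larger samples.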

Bonus Tip: Ask your audience for feedback

Understand why users are reacting to your content the way they do. Using surveys and conducting polls is a great way to acquire additional important information. The best way to do this is to always ask “why.”

Why did your audience choose to react the way they did? Did they find a 10% discount more appealing than a free shipping offer in an email subject line? Asking these questions and using that information to propel your actions will help set you apart from your competitors.

Other common questions about A/B testing email campaigns

What are the benefits of A/B testing your emails?

- A/B testing helps optimize the performance of email campaigns by allowing you to test different variations of an email to determine which one generates more engagement. Benefits include improved engagement, increased conversion rates, and greater audience insights. Most importantly, A/B testing takes the guesswork out of your email marketing, giving you the data you need to make informed decisions.

What are the disadvantages of A/B testing emails?

- A/B testing is certainly valuable for marketers, but it does have its disadvantages. Without the right systems and tools in place, A/B testing can be fairly time-consuming. Additionally, without a large enough sample size and enough time given to the experiment, results may be inconclusive or incorrect. It’s important to make sure statistical significance is reached. Another disadvantage of A/B testing is over-optimization. Don’t let the small things distract you from the big picture.

What problems does email A/B testing solve?

- A/B testing is not the be-all and end-all for marketers, especially with the rise of personalization and SMS. However, A/B testing does solve a range of problems, including improving low engagement rates, removing uncertainty, and improving personalization and efficiency.

How accurate is A/B testing?

- A/B testing as a whole can be accurate when done properly. However, its accuracy depends on factors including sample size, test duration, and consistency. Marketers often get impatient, cutting tests short, changing elements, or assuming a test is over before it has truly had time to gather the right amount of data. To create an accurate email A/B test, it’s important to let the experiment run its course.

Start A/B testing your emails today

Every email you send is a new opportunity to optimize your campaigns. A/B testing your emails can have a huge effect not only on your open rates but also on your bottom line.